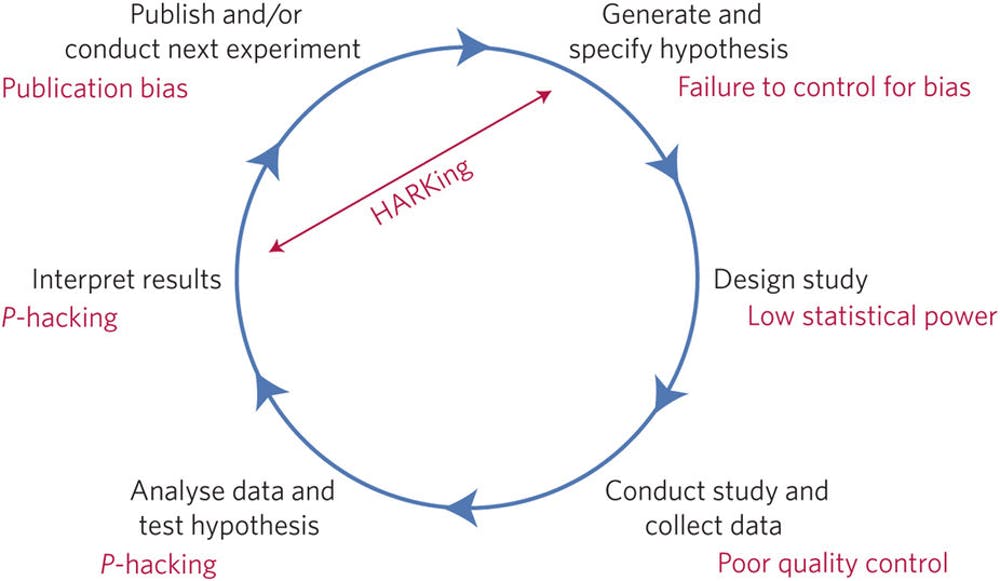

A depiction of the idealized model of scientific discovery, with threats to the integrity of the results included in red. From Munafò et al., 2017.

In 2016, Nature published a survey of scientists regarding their experiences with reproducibility. It found that 38% of respondents felt there was a slight problem with reproducibility in their field, while 52% reported a significant problem. In medicine, respondents felt, on average, that about half of the published literature was reliable. This survey helped fuel the narrative that there is a reproducibility “crisis” in science. The authors go on to discuss the potential causes and cures, which I will get to below.

However, not everyone accepted that narrative. Publishing in PNAS in 2018, Daniele Fanelli reports:

This article provides an overview of recent evidence suggesting that this narrative is mistaken, and argues that a narrative of epochal changes and empowerment of scientists would be more accurate, inspiring, and compelling.

Everyone seems to agree that there is a problem of unreliable research in every field of science, but they disagree on its scope and impact. Fanelli argues that the problem is minor, laying out the evidence for this position:

Recent evidence from metaresearch studies suggests that issues with research integrity and reproducibility, while certainly important phenomena that need to be addressed, are: (i) not distorting the majority of the literature, in science as a whole as well as within any given discipline; (ii) heterogeneously distributed across subfields in any given area, which suggests that generalizations are unjustified; and (iii) not growing, as the crisis narrative would presuppose.

On the other end of the spectrum is Dorothy Bishop, who in 2019, again in Nature, stated:

I strongly identify with the movement to make the practice of science more robust. It’s not that my contemporaries are unconcerned about doing science well; it’s just that many of them don’t seem to recognize that there are serious problems with current practices. By contrast, I think that, in two decades, we will look back on the past 60 years — particularly in biomedical science — and marvel at how much time and money has been wasted on flawed research.

This is an issue we have explored at length here at SBM, and so I am very familiar with the underlying literature, having summarized it many times. My take is that both Fanelli and Bishop are correct – the lack of reproducibility and robustness in science is a serious problem, it’s just not a fatal problem.

When I lecture about this issue myself I have to be careful to convey this nuance. When you lay out all the documented pitfalls in science it can give the false impression that science is “broken” or in “crisis”. Not necessarily. Rather, I think science is messy; it takes a winding and inefficient path, but does usually get to its destination eventually. Results that are consistent with reality do have an inherent advantage within science over results that are not in accord with reality, and this advantage does tend to sort them out over time.

The narrative that I prefer is that science is hard and if we want to maximize scientific advance (the results we get for our investment), then we need to optimize the technology of science itself. Methodological problems give us temporary false positives (and sometimes false negatives – but there is a huge bias toward the false positive) and increase the time and resources it takes to get to a reliable answer.

But increased rigor and care also cost time and resources. We could avoid false results in science if we slowed it down to a crawl, but that would defeat the purpose. What is that purpose? That’s actually a good place to start. It is not, I would argue, to minimize error in science; it is to optimize advance, defining advance as producing highly reliable (which includes being correct) conclusions about how the universe works.

In order to discover new things we need to take risks, ask questions, and be willing to be wrong a lot. We need to throw a lot of stuff up against the wall and see what sticks, and then confirm and reconfirm our results. Somewhere there is a sweet spot, where scientific methods are efficient enough to ask a lot of questions, but careful enough to produce reliable results and not go down too many rabbit holes.

Like Fanelli I do not think we need to embrace a “crisis” narrative, and I don’t think science is broken. But like Bishop I think we are currently not at the sweet spot, and need to push methodology toward the rigorous end of the spectrum to get to a better balance than where we are generally today.

There are two other layers here worth mentioning. The first is that not all scientific research has to strike the same balance of efficiency and rigor. We can (and do) take a staged approach. We have preliminary research which is down and dirty, optimized for efficiency with the minimal rigor necessary to produce worthwhile results. Research at this end of the spectrum is designed only to inform later research, not to form a basis for firm conclusions. At the confirmatory end of the spectrum research prioritizes extreme methodological rigor, trying as best as we can to answer the question – is this phenomenon really real?

What is important is that we understand where we are with any given question along this spectrum, and not confuse preliminary research with confirmatory research. I don’t think this is generally a problem within mainstream science (scientists get this), but I do think it is a problem with science reporting to the public. I also think it is a systemic problem within those disciplines that I would consider on the fringe, or pseudoscientific.

Take acupuncture, for example. Normally any particular scientific question will progress from more preliminary to more rigorous research methodology. As the research gets more and more rigorous, we begin to see the real effect size or reality of the phenomenon. When we get to the most rigorous studies, we can start to form reliable conclusions. Acupuncture research, to a degree, progressed toward more rigorous studies. The problem (for acupuncturists) is that these studies are generally negative.

Acupuncturists, rather than coming to the correct scientific conclusion that acupuncture does not work, instead started doing less rigorous research. They retreated to more preliminary designs, which they disguised as “pragmatic” studies, and just kept cranking out false positive preliminary research. That is where acupuncture research now lives.

This also relates to the second layer worth mentioning – some sciences are applied. Medicine, for example, is not just engaged in the abstract process of understanding biology. We need answers that determine how we actually treat patients in the real world. In applied sciences we need to have, I argue, a nuanced and sophisticated understanding of the nature of scientific research and its relationship to practice. When are results reliable enough to affect our practice? Where should we draw the line? This, of course, is the focus of most of the articles here on SBM.

Finally, while there is disagreement over the extent and implications of the replication problem, there is broad agreement on the causes and some of the fixes. Everyone agrees that we need to minimize fraud and outright error. Bishop colorfully designates the main problems as the:

…four horsemen of the reproducibility apocalypse: publication bias, low statistical power, P-value hacking and HARKing (hypothesizing after results are known).

Every researcher needs to have a deep understanding of these phenomena and how to avoid them. The original Nature survey asked scientists which potential fixes they thought would be most worthwhile. Here they are (from most to least popular): better understanding of statistics, better mentoring, more robust design, better teaching, more within-lab replication, incentives for better practice, incentives for formal reproduction, more external lab validation, more time for mentoring, journals enforcing standards, and more time checking notebooks.

It’s hard to argue that these are not all good things. The only objection raised is that these cost more time and resources, but I do think the evidence supports a cultural shift within science toward more rigor and methods for minimizing error. Every researcher, for example, needs to know exactly what P-hacking is and how to avoid it.
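For readers who want to see P-hacking in action, here is a minimal simulation sketch. It is my own illustration, not taken from any of the papers discussed, and every name and parameter in it (`simulate`, ten outcomes, 20 subjects per group, a simple z-test with known unit variance) is an assumption chosen to keep the example short. There is no real effect anywhere in the simulated data. The “honest” researcher tests one prespecified outcome; the “P-hacking” researcher measures ten outcomes and reports whichever one looks best.

```python
import math
import random


def two_sided_p(group_a, group_b):
    """Two-sided z-test p-value for a difference in means.

    Assumes equal group sizes and a known standard deviation of 1,
    which holds here because we generate the data ourselves.
    """
    n = len(group_a)
    diff = sum(group_a) / n - sum(group_b) / n
    z = diff / math.sqrt(2.0 / n)
    return math.erfc(abs(z) / math.sqrt(2.0))


def simulate(n_studies=2000, n_outcomes=10, n_per_group=20, alpha=0.05, seed=1):
    """Return (honest, hacked) false positive rates when no effect exists."""
    rng = random.Random(seed)
    honest_hits = 0
    hacked_hits = 0
    for _ in range(n_studies):
        p_values = []
        for _ in range(n_outcomes):
            # Both groups come from the same distribution: the null is true.
            a = [rng.gauss(0, 1) for _ in range(n_per_group)]
            b = [rng.gauss(0, 1) for _ in range(n_per_group)]
            p_values.append(two_sided_p(a, b))
        # Honest analysis: only the first, prespecified outcome counts.
        if p_values[0] < alpha:
            honest_hits += 1
        # P-hacked analysis: report the best-looking outcome of the ten.
        if min(p_values) < alpha:
            hacked_hits += 1
    return honest_hits / n_studies, hacked_hits / n_studies


honest_rate, hacked_rate = simulate()
print(f"False positive rate, prespecified outcome: {honest_rate:.1%}")
print(f"False positive rate, best of ten outcomes: {hacked_rate:.1%}")
```

Under these assumptions the prespecified analysis should produce false positives at roughly the nominal 5% rate, while picking the best of ten independent outcomes should push the rate toward 1 − 0.95^10, or about 40%. Real P-hacking is usually subtler than this (optional stopping, flexible exclusions, choice of covariates), but the arithmetic is the same.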

It also seems that journals need to value replications more, and negative results more. Bishop points out, for example, that a negative study is often referred to as a “failed” study. This is completely wrong – if the study was rigorous and achieved a reliable result, it did not fail. It answered the question, the answer just happens to be no. A failed study is one that is a false positive or negative because of poor methodology.
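The cost of not publishing negative results can also be sketched with a short simulation. Again, this is only an illustration under assumptions I have picked for convenience: a small true effect of 0.2 standard deviations, 20 subjects per group, a z-test with known unit variance, and a hypothetical journal policy of publishing only statistically significant positive results. None of these numbers come from the survey or from Bishop’s article.

```python
import math
import random
from statistics import mean


def run_study(rng, true_effect, n_per_group):
    """Simulate one two-group study; return (observed effect, two-sided p)."""
    treated = [rng.gauss(true_effect, 1) for _ in range(n_per_group)]
    control = [rng.gauss(0, 1) for _ in range(n_per_group)]
    diff = mean(treated) - mean(control)
    z = diff / math.sqrt(2.0 / n_per_group)
    return diff, math.erfc(abs(z) / math.sqrt(2.0))


def simulate_literature(n_studies=5000, true_effect=0.2, n_per_group=20,
                        alpha=0.05, seed=2):
    """Compare the average effect in all studies with the 'published' subset."""
    rng = random.Random(seed)
    all_effects = []
    published_effects = []
    for _ in range(n_studies):
        diff, p = run_study(rng, true_effect, n_per_group)
        all_effects.append(diff)
        # Hypothetical journal filter: only significant positive results appear.
        if p < alpha and diff > 0:
            published_effects.append(diff)
    share_published = len(published_effects) / n_studies
    return mean(all_effects), mean(published_effects), share_published


all_mean, published_mean, share_published = simulate_literature()
print(f"Mean effect across all studies:       {all_mean:.2f}")
print(f"Mean effect in 'published' studies:   {published_mean:.2f}")
print(f"Share of studies that got published:  {share_published:.1%}")
```

With small, underpowered studies like these, only a minority clear the significance threshold, and the ones that do are by construction the ones that overestimated the effect. The average across all studies should sit close to the true 0.2, while the “published” average has to be well above it. This is how publication bias and low statistical power combine to exaggerate effect sizes in the literature, and why rigorous negative studies are not failures.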

At the very least there needs to be better education of scientists and applied science practitioners about all these issues. I routinely lecture to my colleagues about SBM and always take the opportunity to ask basic questions, like “Who knows what p-hacking is? Or how about funnel plots?” I often get mostly blank stares with just a few hands going up. I do think we’re making progress, and the fact that mainstream science journals are publishing opinion pieces about this issue is encouraging. But we have a ways to go – particularly as we get farther and farther out to the fringes. That is where so-called “alternative medicine” lives and why it is such a focus of SBM.

Reproducibility Follow Up

Let’s explore dueling narratives about the reproducibility “crisis.”