Decision aids are one tool to ensure both physicians and patients understand the risks and benefits of tests and treatments.

The era of medical paternalism has largely disappeared. Few of us are willing to let health decisions be made on our behalf without our input. Today the goal is “shared decision-making,” a collaborative process that takes into account a health professional’s medical knowledge and advice as well as a patient’s own preferences and wishes. Truly shared decision-making includes explicit consideration of the evidence base, including a treatment’s (or test’s) expected benefits and potential harms. Because patient values vary, decisions may vary too. There isn’t always one “right” answer.

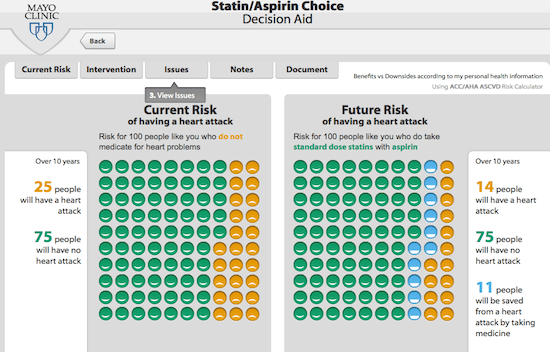

A friend recently asked me for my opinion of statins. He is in his early fifties, is active, and doesn’t smoke. His physician had brought up statins but didn’t push him in one direction or another. Because this type of decision is so common, there is an abundance of decision tools that draw on evidence from studies of cardiovascular risk, let you enter your own risk factors, and then generate a summary of the expected benefits and harms of a statin (like this one). After using the tool and discussing it with his physician, he decided against taking a statin. The potential benefit was small, and he didn’t want the daily hassle of medication, the potential side effects, or the prescription cost. This was the right decision for him, and a great example of shared decision-making guided by a tool that incorporates the best clinical evidence. Unfortunately, too few of our health care decisions are supported by direct clinical evidence and effective decision aids.
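One common way decision aids express benefit is to convert a relative risk reduction from trials into an absolute change in risk for one person. The sketch below shows that arithmetic, assuming the tool works from a single baseline event risk and a single relative risk reduction. The function `summarize_benefit` and its inputs (an 8% baseline risk and a 25% relative reduction) are hypothetical illustrations, not figures from any study or from the tool mentioned above.

```python
# Minimal sketch of how a risk-based decision aid might express benefit.
# All inputs below are hypothetical placeholders, not data from any study.
from dataclasses import dataclass


@dataclass
class BenefitSummary:
    baseline_risk: float           # event risk without treatment (0 to 1)
    treated_risk: float            # event risk with treatment (0 to 1)
    absolute_reduction: float      # baseline_risk minus treated_risk
    number_needed_to_treat: float  # people treated per event avoided


def summarize_benefit(baseline_risk: float, relative_risk_reduction: float) -> BenefitSummary:
    """Convert a relative risk reduction into absolute terms for one person."""
    if not 0 < baseline_risk < 1:
        raise ValueError("baseline_risk must be strictly between 0 and 1")
    if not 0 < relative_risk_reduction < 1:
        raise ValueError("relative_risk_reduction must be strictly between 0 and 1")
    treated_risk = baseline_risk * (1 - relative_risk_reduction)
    absolute_reduction = baseline_risk - treated_risk
    return BenefitSummary(
        baseline_risk=baseline_risk,
        treated_risk=treated_risk,
        absolute_reduction=absolute_reduction,
        number_needed_to_treat=1 / absolute_reduction,
    )


if __name__ == "__main__":
    # Hypothetical person: 8% baseline risk; treatment assumed to cut risk by 25%.
    summary = summarize_benefit(0.08, 0.25)
    print(f"Risk without treatment: {summary.baseline_risk:.1%}")
    print(f"Risk with treatment:    {summary.treated_risk:.1%}")
    print(f"Absolute reduction:     {summary.absolute_reduction:.1%}")
    print(f"Number needed to treat: {summary.number_needed_to_treat:.0f}")
```

With these hypothetical inputs, risk falls from 8% to 6%: an absolute reduction of 2 percentage points, or roughly 50 people treated for one to avoid an event. The same relative reduction gives a much smaller absolute benefit to someone at lower baseline risk, which is why entering your own risk factors matters.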

In order to make health decisions that reflect our own preferences, we need to understand the benefits and risks of any treatment, test, or screening test that is offered to us. The foundation underlying these decisions is an honest, unbiased evaluation of the scientific evidence. Science is the best tool we have to objectively evaluate new treatments and understand their risks and benefits. Back in 2015 I wrote about a systematic review that assessed patient expectations of the benefits and harms of treatments, tests, and screening tests. It included 36 studies: 16 that looked at treatments and 20 that looked at screening. The sample was small, but the findings were consistent: patients tend to underestimate the harms and overestimate the benefits of a medical intervention. But patient decisions can be heavily influenced by expert opinion. So do experts have an accurate understanding of risk and benefit? That’s what this new study sought to determine.

This new paper is entitled “Clinicians’ Expectations of the Benefits and Harms of Treatments, Screening, and Tests.” Published in JAMA Internal Medicine, it’s written by Tammy C Hoffmann and Chris Del Mar of the Centre for Research in Evidence-Based Practice at Bond University in Australia. They also wrote the systematic review of patient expectations, so this is a nice companion paper. For context, a systematic review is a review and analysis of previously published research. By methodically selecting and analyzing studies, a systematic review aims to give a perspective on the totality of the evidence on a particular subject. Ultimately, systematic reviews are only as good as the evidence they include. As we’ve seen with alternative medicine, systematic reviews can lead to some questionable findings.

This was a systematic review of all studies that assessed physician expectations of the benefits and/or harms of any treatment, test, or screening test. Any study in which participants provided a quantitative estimate was included. The authors did a comprehensive search of four databases and then, using the relevant articles found, searched for additional articles (via their references and index terms). With only two authors, most of the work appears to have been done by both in parallel. Where a study reported the correct answer for a benefit or harm, the authors calculated the proportion of participants who estimated it correctly, overestimated it, or underestimated it.
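The tallying step described above can be sketched as follows. This is an illustration under stated assumptions, not the authors’ procedure: the `tolerance` that counts as a correct answer, the function names, and the sample estimates are all hypothetical. The `majority_label` helper mirrors the “more than half of participants” threshold used when the results are reported.

```python
# Sketch of tallying clinicians' estimates against a known correct value.
# The tolerance defining a "correct" estimate is a hypothetical choice;
# the review's actual criteria may differ.
from collections import Counter
from typing import Dict, List, Optional

LABELS = ("correct", "overestimate", "underestimate")


def classify(estimate: float, correct: float, tolerance: float) -> str:
    """Label one estimate relative to the correct value."""
    if abs(estimate - correct) <= tolerance:
        return "correct"
    return "overestimate" if estimate > correct else "underestimate"


def tally(estimates: List[float], correct: float, tolerance: float) -> Dict[str, float]:
    """Proportion of participants in each category."""
    if not estimates:
        raise ValueError("need at least one estimate")
    counts = Counter(classify(e, correct, tolerance) for e in estimates)
    return {label: counts[label] / len(estimates) for label in LABELS}


def majority_label(proportions: Dict[str, float]) -> Optional[str]:
    """Category chosen by more than half of participants, or None if there is none."""
    for label, share in proportions.items():
        if share > 0.5:
            return label
    return None


if __name__ == "__main__":
    # Hypothetical estimates (%) of a harm whose true rate is 10%.
    props = tally([5, 8, 10, 12, 20], correct=10, tolerance=2)
    print(props, majority_label(props))
```

For this hypothetical harm, estimates of 5, 8, 10, 12, and 20 with a tolerance of 2 percentage points give 60% correct, 20% overestimates, and 20% underestimates, so the majority label is “correct.” An outcome only counts as majority-correct when one category clears the 50% mark.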

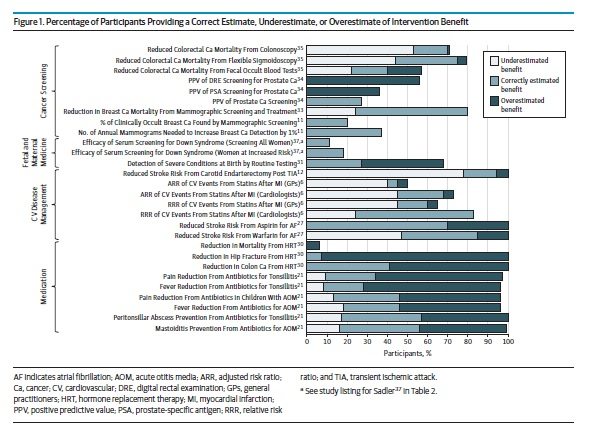

The authors found 48 eligible studies published between 1981 and 2015, encompassing over 13,000 clinicians. Twenty studies examined treatments, 8 addressed tests or screening, and 20 addressed medical imaging. Across the studies, 181 outcomes were assessed: 23% assessed expectations of benefit, 69% assessed expectations of harm, and 8% were neutral. Overall, the majority of participants correctly estimated only 11% of the benefit outcomes and 13% of the harm outcomes. When estimates were wrong, participants more often overestimated benefit and underestimated harm than the reverse.

Expectations of benefit

Benefit expectations were assessed in 15 studies, covering outcomes such as risk reductions from statins or reductions in breast cancer mortality from mammography. The findings are a bit complicated to sort out because the primary data are reported in different ways, which sometimes prevented calculating over- or underestimation. Among outcomes with overestimation/underestimation data, 50% or more of participants overestimated benefit for 7 outcomes (32%) and underestimated benefit for 2 outcomes (9%).

Expectations of harm

Estimates of harm were compared with the correct values in 26 studies (69 outcomes). The majority of participants (>50%) correctly estimated harm for only 9 outcomes (13%); the majority underestimated harm for 20 outcomes (34%) and overestimated it for 3 outcomes (5%).
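The percentages in the benefit and harm sections can be checked with a little arithmetic. Assuming each figure is a count divided by some number of outcomes and rounded to the nearest whole percent, the sketch below searches for denominators that reproduce them. The function names are hypothetical, and any denominator it finds is an inference, not a number reported in the paper.

```python
# Sanity check: which denominators reproduce the rounded percentages quoted?
# Assumes each percentage = count / denominator, rounded to the nearest whole
# percent. Any denominator found is an inference, not a reported figure.
from typing import List, Tuple


def rounded_pct(count: int, denominator: int) -> int:
    """Percentage rounded half up (avoids Python's round-half-to-even)."""
    return int(100 * count / denominator + 0.5)


def consistent_denominators(pairs: List[Tuple[int, int]], max_n: int = 200) -> List[int]:
    """Denominators up to max_n for which every (count, percent) pair matches."""
    return [
        n
        for n in range(1, max_n + 1)
        if all(count <= n and rounded_pct(count, n) == pct for count, pct in pairs)
    ]


if __name__ == "__main__":
    benefit = [(7, 32), (2, 9)]  # majority overestimated / underestimated benefit
    harm = [(20, 34), (3, 5)]    # majority underestimated / overestimated harm
    print("benefit:", consistent_denominators(benefit))
    print("harm:", consistent_denominators(harm))
    print("9 of 69 harm outcomes:", rounded_pct(9, 69), "%")
```

Of denominators up to 200, only 22 reproduces the benefit figures, consistent with those percentages being based on the subset of outcomes with over/underestimation data. The harm figures fit 58 or 59 outcomes rather than all 69, which suggests they use a similar subset, while 9 of 69 rounds to the 13% reported for correct harm estimates.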

The paper goes on to break down the findings by intervention type, which I won’t describe here. Instead, here’s Figure 1, which shows the overall summary of benefits (Figure 2 in the paper shows harms).

Is there a “therapeutic illusion”?

This is the most comprehensive summary to date of physician expectations. Clinicians rarely estimated benefits and harms accurately, and tended to underestimate harms and overestimate benefits. Over-optimism has the potential to push physicians to offer, and patients to accept, more interventions than might be necessary or desirable. And that may be what we’re seeing. Certainly initiatives like Choosing Wisely are expressly designed to guide patients and clinicians into re-evaluating low-value or ineffective care.

The reasons for these beliefs are unclear, and given the diversity of the included studies, there are almost certainly many factors contributing to this evidence gap. It could reflect the sheer volume of clinical evidence that exists, or the challenge of translating clinical trial results into individual assessments of risk and benefit. It’s not clear, and while the authors summarize the possibilities, ultimately, “more research is required.”

So, recognizing that clinicians are likely overestimating benefit and underestimating harm, how do we fix the problem? Shared decision-making will be biased towards testing and treatment if both patient and clinician are unduly optimistic. Decision support tools grounded in good evidence, like the one I described above, are a start. More generally, clinicians need access to unbiased, accurate evidence summaries that describe benefits and harms and help them communicate these accurately to patients. Ultimately, if we want to improve the efficiency and effectiveness of care, we need to address both health professional and patient expectations. Otherwise, our health professionals and our health systems will continue to offer sub-optimal, and even wasteful or harmful, medicine.