I have written about Protandim several times. In May 2017, I said that while there was no evidence from human studies that it improved meaningful clinical health outcomes, Protandim was probably safe to try. I based my opinion about its safety on a human trial in athletes. That trial was negative, but I relied on its report of signs/symptoms to conclude that side effects were not significantly more likely with Protandim than with placebo. That was unwise of me. A research scientist who wishes to remain anonymous recently emailed me to point out some serious flaws in that study. The authors fudged or carelessly miscalculated the data, which actually show that side effects are almost twice as common with Protandim as with placebo. And there were a number of other irregularities.

What is Protandim?

Protandim is a mixture of milk thistle, Bacopa extract, Ashwagandha, green tea extract, and turmeric extract. It is supposed to indirectly increase antioxidant activity. But there have now been three double-blind, randomized, placebo-controlled trials in humans, all showing that it did not increase antioxidant activity.

Why do people use it?

I don’t get it. Why are people taking a supplement that has been shown not to work? I guess it’s a matter of preferring hope to evidence. Apparently customers are willing to disregard the negative evidence from human trials and prefer to rely on animal studies, in vitro studies, and one non-randomized, non-controlled human trial carried out by the company in 2006 that found that TBARS (thiobarbituric acid reactive substances) declined by 40% with Protandim. They are assuming that those findings are valid, although they have never been replicated. They are further assuming that decreased TBARS means they will enjoy better health, longer life, weight loss, less chronic disease, or some other unproven benefit. There is no evidence for any of that, and the company was recently warned by the FDA that it must stop suggesting that Protandim could prevent cancer, diabetes, cardiovascular disease, and Alzheimer’s disease. Numerous statements on the company website constituted misbranding.

Side effects

The company website says they don’t expect any side effects for the typical consumer, but some individuals may have natural allergic responses to one or more of the ingredients, manifested as stomach ache, diarrhea, vomiting, headache, or rash on the hands and feet. This does not describe a typical allergic response to a medication, which is manifested by hives, itching, generalized rashes not restricted to hands and feet, fever, swelling, wheezing, etc. The Natural Medicines Comprehensive Database reports non-allergic side effects, notably gastrointestinal, for each of the ingredients in Protandim. The only human study to look at side effects was the one I cited, and the company has no basis for its claim that any side effects are merely allergic responses.

The most recent human study “suggested that Protandim may enhance proteostatic mechanisms of skeletal muscle contractile proteins after 6 weeks of milk protein feeding in older adults.” It was a small study that only looked at lab tests and did not report any kind of clinical improvement and did not mention side effects at all.

Another concern is contamination. There has already been one recall due to metal contamination.

Data presentation and analysis were faulty

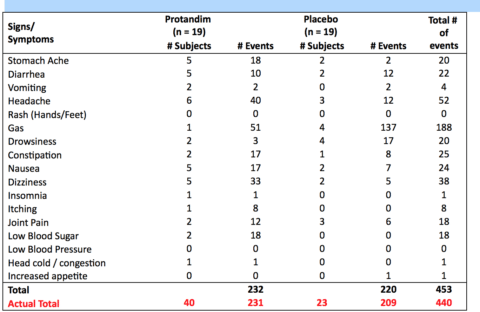

Table 4, from the study, is reproduced here with corrections. It is interesting in several respects:

- Errors in addition:

    - They listed 232 events in the Protandim group. There were 231.

    - They listed 220 events in the placebo group. There were 209.

    - They listed 453 total events. There were 440.

- Two signs/symptoms listed in the table (rash, low blood pressure) didn’t occur in either group. There is no reason for them to be listed.

- The column on the right lists the total number of events in both groups. That information is meaningless.

- Only one subject in the Protandim group experienced gas, but that subject reported it 51 times, which would tend to skew the event totals.

- No patients in the placebo group reported vomiting, but 2 vomiting events are listed (!?)

- The 19 patients in the Protandim group reported 40 signs and symptoms, nearly twice as many as the 19 patients in the placebo group (23). I would guess that is a statistically significant difference, but they made no attempt at statistical analysis and didn’t even mention the difference in their write-up.

- There were at least twice as many reports in the Protandim group for stomach ache (5 vs 2), diarrhea (5 vs 2), vomiting (2 vs 0), headache (6 vs 3), nausea (5 vs 2), dizziness (5 vs 2), and low blood sugar (2 vs 0).

- There were many more events reported in the Protandim group for stomach ache (19 vs 2), headache (40 vs 12), constipation (17 vs 8), nausea (17 vs 7), dizziness (33 vs 5), itching (8 vs 0), joint pain (12 vs 6), and low blood sugar (18 vs 0).

- There is no attempt at statistical analysis.

- The total number of events is not as meaningful as the number of patients reporting side effects. This is not the way side effects are usually tabulated in research studies.

- We could calculate averages from the data: 23 side effects were reported in the placebo group of 19 subjects, for an average of 1.2; 40 were reported in the Protandim group of 19 subjects, for an average of 2.1. But averages can obscure details. It would be more interesting to know how many side effects were reported by each individual. What if there was an outlier in the Protandim group who was a hypochondriac and who reported all 15 side effects? That would drastically skew the results. And it would be interesting to know if any side effects were reported by the principal investigator who was also a subject.

- The numbers from such a small study (19 subjects in each group) are insufficient to determine the actual incidence of side effects attributable to Protandim, but they certainly suggest that side effects are more common than the company website indicates.
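For readers who want to check the arithmetic, here is a minimal Python sketch; it is not anything the study's authors did. It recomputes the per-subject averages from the totals given above (40 and 23 reported signs/symptoms, 19 subjects per group) and then applies one standard way of comparing two counts from equal-sized groups: an exact conditional binomial test, under which each report is equally likely to have come from either group if Protandim made no difference. The function names are hypothetical, and `math.comb` needs Python 3.8 or later. The test assumes every report is an independent event, which is exactly what an outlier like the hypothetical hypochondriac would violate, so the result is a rough check rather than a proper analysis.

```python
from math import comb

N_PER_GROUP = 19        # subjects in each arm
PROTANDIM_REPORTS = 40  # signs/symptoms reported on Protandim (from the text)
PLACEBO_REPORTS = 23    # signs/symptoms reported on placebo (from the text)


def mean_per_subject(reports: int, n_subjects: int) -> float:
    """Average number of reported signs/symptoms per subject."""
    return reports / n_subjects


def exact_rate_test(a: int, b: int) -> float:
    """Two-sided exact conditional test for two counts from equal-sized groups.

    If both groups share the same underlying rate, each of the a + b reports is
    equally likely to fall in either group, so a ~ Binomial(a + b, 0.5). That
    distribution is symmetric, so the two-sided p-value is twice the smaller
    tail, capped at 1. Assumes reports are independent events.
    """
    n = a + b
    smaller_tail = sum(comb(n, k) for k in range(min(a, b) + 1)) / 2**n
    return min(1.0, 2 * smaller_tail)


if __name__ == "__main__":
    print(f"placebo:   {mean_per_subject(PLACEBO_REPORTS, N_PER_GROUP):.1f} per subject")
    print(f"Protandim: {mean_per_subject(PROTANDIM_REPORTS, N_PER_GROUP):.1f} per subject")
    print(f"two-sided p = {exact_rate_test(PROTANDIM_REPORTS, PLACEBO_REPORTS):.3f}")
```

With these inputs the sketch gives averages of 1.2 and 2.1 per subject and a two-sided p-value of roughly 0.04, just under the conventional 0.05 cutoff. Counting subjects rather than reports would be the more appropriate analysis, but the per-subject data needed for it are not available from the table.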

Other irregularities

- Protocol violation. In the Funding Statement, the authors indicate that when the trial failed its primary endpoint, they included data from a post-hoc analysis of superoxide dismutase (SOD) that was not part of the original trial design and therefore was a protocol violation.

- P-hacking. They analyzed SOD in a subset of male subjects over the age of 35, which amounted to only 8 subjects aged 35 to 46. This is an example of p-hacking/fishing/cherry-picking at its worst.

- The principal investigator was also a subject.

- Safety signals were ignored. Basing an analysis of adverse events solely on a comparison of the total number of all events is unheard of. My correspondent said, “Imagine if a study used an analysis like that where the raw data showed a 10-fold higher rate of lymphoma vs placebo but it got washed out because of a higher rate of some trivial adverse event like constipation in the placebo group.”

- The norm is to call attention to any adverse events that occur in the treatment group at a rate that exceeds a certain threshold (often as low as 10-15%) or exceeds that of the placebo group by a certain ratio (double for example). The authors failed to do that.
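The screening rule described in the last bullet is simple enough to write down. The Python sketch below applies it symptom by symptom to the per-symptom counts listed earlier (stomach ache 5 vs 2, and so on), which appear to be the number of subjects reporting each symptom out of 19 per group. The 10% incidence threshold and the doubling ratio are illustrative choices taken from the ranges mentioned above, not values from any particular guideline, and `flag_signals` is a hypothetical helper name. Because each symptom is judged on its own, a large imbalance in one symptom cannot be washed out by a total that lumps in unrelated events, which is the problem raised in the lymphoma example.

```python
# Subjects per arm in the study discussed above.
N_PROTANDIM = 19
N_PLACEBO = 19

# Per-symptom counts from the text: (Protandim, placebo). Assumed here to be
# the number of subjects reporting each symptom, not the number of events.
REPORTED = {
    "stomach ache": (5, 2),
    "diarrhea": (5, 2),
    "vomiting": (2, 0),
    "headache": (6, 3),
    "nausea": (5, 2),
    "dizziness": (5, 2),
    "low blood sugar": (2, 0),
}


def flag_signals(counts, n_treat, n_ctrl, min_incidence=0.10, min_ratio=2.0):
    """Return {symptom: [reasons]} for symptoms that meet either screening rule.

    Rule 1: incidence in the treatment group is at least `min_incidence`.
    Rule 2: incidence in the treatment group is at least `min_ratio` times the
            placebo incidence (any cases against zero on placebo qualifies).
    Thresholds are illustrative, not taken from a specific guideline.
    """
    flagged = {}
    for symptom, (treat, ctrl) in counts.items():
        reasons = []
        treat_rate = treat / n_treat
        if treat_rate >= min_incidence:
            reasons.append(f"incidence {treat_rate:.1%} on treatment")
        # Cross-multiply to compare rates without dividing by a zero placebo rate.
        if treat > 0 and treat * n_ctrl >= min_ratio * ctrl * n_treat:
            reasons.append(f"{treat}/{n_treat} vs {ctrl}/{n_ctrl} on placebo")
        if reasons:
            flagged[symptom] = reasons
    return flagged


if __name__ == "__main__":
    for symptom, reasons in flag_signals(REPORTED, N_PROTANDIM, N_PLACEBO).items():
        print(f"{symptom}: {'; '.join(reasons)}")
```

With these defaults every one of the seven symptoms is flagged: each was reported by at least 2 of the 19 Protandim subjects (about 10.5%), and each was at least twice as common as on placebo.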

Conclusion: reason for concern

Is there a risk of side effects with Protandim? The only study that has attempted to answer that question was carelessly done, full of flaws, and can’t be trusted. I was wrong to opine that Protandim would probably be safe to try based on that one flawed study: we can’t really know that it is safe, and there is certainly reason to be concerned about possible adverse effects. Most of the reported events were not serious, but it’s always possible that a larger, longer study might find more serious problems. There are herbal remedies that were widely used for centuries before we realized they were killing people; Aristolochia is a prime example. And since Protandim is not known to have any clinical benefits, and has been shown not to even fulfill its stated purpose of increasing antioxidant levels, it would be hard to justify trying it.

I’m happy to admit it when I am wrong, because it means I have learned something. In this case, there were several lessons: don’t assume that tables are correct or that researchers know how to add, and don’t assume that peer reviewers and editors will catch obvious errors.