[Ed. Note: Sharp-eyed readers might note that this post seems familiar. That’s because, due to circumstances and general craziness, I updated and expanded a post that recently appeared elsewhere. Blogging will return to normal next week.]

Social media has become a cornerstone in the strategy of antivaxers to promote their message. Of course, antivaxers are not unique in their adoption of Twitter, Facebook, Periscope, Snapchat, and the like to spread their message and promote their links far and wide. After all, if you think of most antivaccine misinformation as another form of “fake news,” it makes perfect sense. As a result, scientists have become interested in characterizing how antivaccine misinformation spreads and who is doing the spreading. Consider the whole “CDC Whistleblower” conspiracy theory, which was spawned in August 2014 when antivaccine activist Brian Hooker teamed with antivaccine icon Andrew Wakefield. The two promoted the claim that a CDC scientist, William Thompson, had revealed that a major study of the safety of the MMR vaccine, one that found no association with autism, had “covered up” evidence that the vaccine was associated with a significant increase in autism risk. Early on in the course of that conspiracy theory, antivaxers showed a lack of skill with Twitter. Unfortunately, that’s changed.

Amusingly, Hooker and Wakefield later accused the CDC of scientific fraud, to the explosion of irony meters everywhere, but the patent ridiculousness and bad epidemiology of the “CDC whistleblower” conspiracy theory didn’t stop the publication of a book, movie, and even fake news about how, days after Donald Trump took office, the FBI had raided the CDC based on Thompson’s allegations. Unfortunately, the movie featuring the CDC whistleblower conspiracy theory, VAXXED, despite being propaganda so over-the-top that it would make Leni Riefenstahl blush, has been successful for its makers, Andrew Wakefield and Del Bigtree, allowing them to tour the country for screenings with Q&As afterward that let them spread the gospel of Andy.

Which brings us to the current study.

Smith & Graham: The Facebook study

Some of you might remember that in the usually slow news week between Christmas and New Year’s Day, there was a brief flurry of articles about a study on the antivaccine movement online. This time, unlike previous such studies that I’ve discussed, it wasn’t about Twitter, which amplifies antivaccine messages, in some cases due to the use of bots. In contrast, this study by Naomi Smith, Lecturer in Sociology in the School of Arts, Humanities and Social Sciences at Federation University Australia (Gippsland), and Timothy Graham, Postdoctoral Research Fellow at the Australian National University, Australia, with a joint appointment in the Research School of Social Science and the Research School of Computer Science, was about antivaccine activity on Facebook and entitled “Mapping the anti-vaccination movement on Facebook.”

Before I get into the study itself, let me just vent briefly about a pet peeve I had about the media coverage of the article. One of the findings of the study was (surprise! surprise!) that most antivaxers on Facebook are women. This is about as startling to anyone who’s perused antivaccine Facebook groups (as I have) as the sun rising in the morning and setting at night. The reason is almost certainly very simple: Women still provide the vast majority of direct child care and make most of the decisions about the medical care of their children, as the authors point out. Yet what sorts of headlines did I see? These:

- Online anti-vaxxers are part of a “highly feminized movement.”

- ‘Vast Majority’ of Online Anti-Vaxxers Are Women.

- Women More Active In Online Anti-Vaxxers’ Groups: Study.

My reading of the study was that, while this was one major finding of the study, it is not the main one by any means and certainly not the most important, probably because it’s about as close to a “Well, duh!” finding as you can imagine. Worse, the headlines could provide fodder for antivaxers to claim misogyny. It’s been a common (and, sadly, at times not entirely unjustified) complaint among antivaxers, such as when pro-science advocates harp on Jenny McCarthy’s history as a Playboy Playmate to dismiss her, although far more often it’s vastly overblown. Far better was a headline like “Study Shows What’s Really Going on in Online Anti-Vax Groups,” because it does.

What did the authors do?

So what did the study find? First, let’s look at how it was done. The authors chose Facebook because it is the most popular social network, and they chose a purposive sample of six large public antivaccine Facebook pages. Basically, a purposive sample is not a random sample. Sometimes also called judgmental, selective, or subjective sampling, a purposive sample is a sample selected based on characteristics of a population and the objective of the study. Such a sample can be quite useful in research situations when researchers need to examine a targeted sample and where proportionality is not the main concern. The authors explain their rationale:

We have chosen to focus on Facebook as it is still the most popular social network site in the world and has the broadest user base. A purposive sample of six Facebook pages was selected by triangulating data (Denzin, 1970) from both Australia and North America, providing two important, albeit considerably different, sites of current anti-vaccination activity (see Table 1). Purposive sampling also helped us identify sites that were relevant to the anti-vaccination movement, and provided interesting and important insights on the anti-vaccination movement. In addition, conducting large-scale data analysis (detailed below) the sites were also reviewed before data collection to ensure the timeline for data collection (14 April 2013 and 14 April 2016) would be analytically useful (Smith & O’Malley, 2016).

The authors ended up settling on six public Facebook pages, with the number of Likes as of December 3, 2015 in parentheses:

- Fans of the AVN (9,811)

- Dr. Tenpenny on vaccines (173,410)

- Great mothers (and others) questioning vaccines (17,592)

- No vaccines Australia (3,108)

- Age of autism (12,959)

- RAGE against the vaccines (14,611)

I was actually surprised at how few Likes most of these pages had, while at the same time being disturbed at how many Likes Sherri Tenpenny’s page has. In any case, I have at one time or another perused most of these pages, with the exception of RAGE Against the Vaccines, which, oddly enough, I had never heard of before.

This also brings up another issue that was pointed out to me elsewhere and that I should have noticed right away. One of the biggest antivaccine Facebook pages, that of the National Vaccine Information Center (NVIC), which currently has over 201,000 Likes, was not in the list above. It’s roughly on par in size with Sherri Tenpenny’s Facebook page, which currently has over 222,000 Likes. The NVIC, recall, was founded by Barbara Loe Fisher, the grande dame of the modern iteration of the antivaccine movement, and is one of the oldest antivaccine groups out there. Her name and the NVIC have frequently come up in discussions of antivaccine pseudoscience here on SBM. So, if there’s one thing that disappoints me about this paper, it’s that how the purposive sample was chosen is not very clearly described, and I find it rather hard to understand how one could leave out such a large Facebook page, which, depressingly, has four times as many Likes as the SBM Facebook page (which you should totally like now).

What did they find?

My quibble about the selection of pages for analysis aside, the authors used a range of analytical methods to answer two questions:

- What are the networked properties of anti-vaccination communities on Facebook, including their size, shape, and connectedness?

- What types of anti-vaccination discourses are present within these communities?

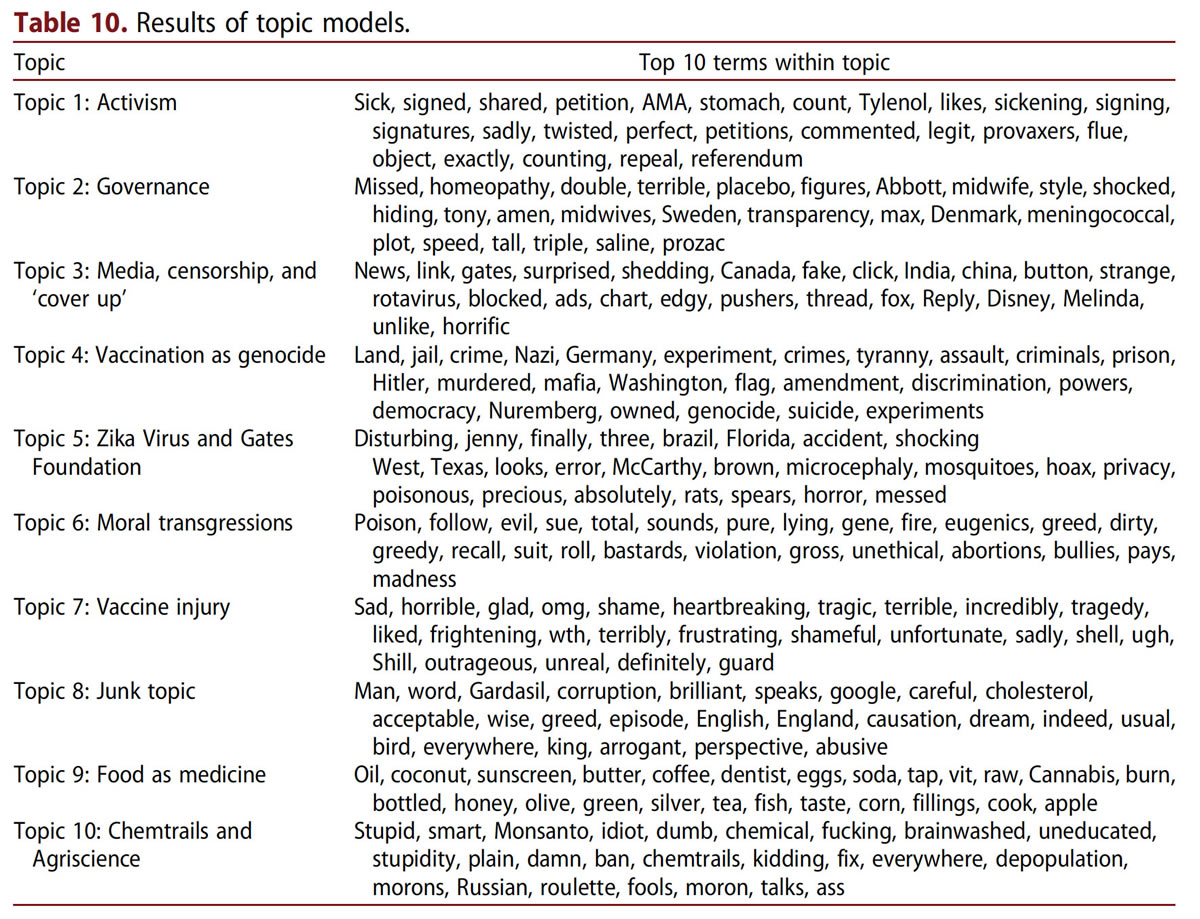

Let’s take a look at the second question first. The authors did topic modeling on the complete set of comment text to determine what topics are likely to be driving antivaccine discourse using a method known as Latent Dirichlet Allocation (LDA). Conceptually, in natural language processing, LDA is a generative statistical model that represents a set of text documents in terms of a mixture of topics that generate words with particular probabilities. The example used to illustrate the concept was this comment: “Kill one person, go to jail, kill thousands and pay a fine. Where is the justice?” which might be largely generated from a topic labelled Crime and Justice, associated with words such as criminal, abuse, murder, crime, jail, justice, experiment, fraud, kill.
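For readers who find code easier to follow than prose, here is a minimal sketch of the generative idea behind LDA. To be clear, this is not the authors' analysis: the two topics, their words, and every probability below are made up for illustration, and real LDA runs in the opposite direction, inferring the topics and mixtures from the comment text rather than being handed them.

```python
import random

# Hypothetical topics (invented for illustration, not taken from the paper).
# Each topic is a probability distribution over words that sums to 1.
TOPICS = {
    "crime_and_justice": {"kill": 0.3, "jail": 0.3, "justice": 0.2, "fine": 0.2},
    "vaccines": {"vaccine": 0.5, "injury": 0.3, "doctor": 0.2},
}

def word_probabilities(topic_mix):
    """p(word | document) = sum over topics of p(topic) * p(word | topic)."""
    probs = {}
    for topic, weight in topic_mix.items():
        for word, p in TOPICS[topic].items():
            probs[word] = probs.get(word, 0.0) + weight * p
    return probs

def generate_document(topic_mix, n_words, rng):
    """For each word slot, pick a topic from the mixture, then a word from that topic."""
    topics = list(topic_mix)
    weights = [topic_mix[t] for t in topics]
    words = []
    for _ in range(n_words):
        topic = rng.choices(topics, weights=weights)[0]
        vocab = list(TOPICS[topic])
        word_weights = [TOPICS[topic][w] for w in vocab]
        words.append(rng.choices(vocab, weights=word_weights)[0])
    return words

# A comment assumed to be drawn mostly from the "crime and justice" topic.
comment_mix = {"crime_and_justice": 0.9, "vaccines": 0.1}
print(generate_document(comment_mix, 8, random.Random(0)))
print(word_probabilities(comment_mix))
```

The point of the sketch is simply that a document is treated as a weighted blend of topics, and each topic as a weighted bag of words. Fitting LDA to real comments means searching for the topics and blends that make the observed text most probable.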

Antivaccine topics on Facebook

I must admit that I was rather surprised that vaccine injury didn’t feature more prominently, although it didn’t surprise me at all that conspiracy theories like chemtrails and quack topics were represented at a high level. This finding also did not surprise me:

The qualitatively labelled topics point towards several key pre-occupations of the antivaccination communities, constitutive of the Facebook pages examined. Primarily, the results of the topic modelling suggest that the anti-vaccination community is very concerned with the institutional arrangements that are perceived to be perpetuating the harmful practice of vaccination. The sentiment across all topic models (with the exception of Topic 9) is quite negative in tone, suggesting that users of the anti-vaccination pages feel not only morally outraged about the practice of vaccination, but structurally oppressed by seemingly tyrannical and conspiratorial government and media. Topics 2, 3, 4, 5, 7, and 10 all appear to accord with conspiracy-style beliefs in which the government and media are key actors in underplaying, denying, or perpetuating the perceived harms caused by vaccinations. These include: media cover-up or denial of the extent of vaccination injury and death (Topic 3); Bill Gates’ involvement in the spread of Zika virus within Brazil and beyond its borders (Topic 5); and chemtrails (Topic 10), which is a belief that the vapour trails emitted by aircraft are chemical compounds sprayed by the government and designed to subdue the population and/or control the weather (Oliver & Wood, 2014).

The prevalence of conspiracy-style thinking is unsurprising. Survey research undertaken by the Cooperative Congressional Election Studies indicates that 55% of respondents in 2011 agreed with at least two of the conspiracy theories they were presented with. This suggests that conspiracy-style thinking is relatively prevalent in the general population, and perhaps particularly pronounced among those who hold anti-vaccination beliefs (Oliver & Wood, 2014).

If conspiracy-style thinking is highly prevalent in the general population (and the rise of fake news is a definite indicator that it is), then the antivaccine movement represents conspiracy-style thinking on steroids.

Regarding the first question, the authors found that antivaccine networks are an “effective hub” for distributing antivaccine misinformation designed to encourage grass roots resistance to current vaccination policies and this:

Our analysis suggests that anti-vaccination networks, despite their relative size and high levels of activity, are relatively sparse or ‘loose’, that is, they do not necessarily function as close-knit communities of support with participants interacting with each other in a sustained way over time. However, this does not necessarily mean that anti-vaccination networks provide no support. Simply participating in a community of like-minded others may reinforce and cement anti-vaccination beliefs. Our data also suggest that participants are moderately active across several anti-vaccination Facebook pages, suggesting that users’ activity on anti-vaccination is more than just a product of Facebook’s recommender system. Liking and actively commenting on a number of anti-vaccination pages across Facebook suggests that those who are involved in this network may develop a pattern of activity and involvement across multiple pages, creating a filter bubble effect that reinforces anti-vaccination sentiment and practice. However, it is difficult to discern from the data to what extent the filter bubble is created through users’ own agency and activity, and how much is influenced by the algorithmic structure of Facebook, whereby Facebook actively targets users with content they would be more likely to click on and relate to.

So we have a loosely knit network of antivaccine Facebook pages, although one thing I have to wonder about this study is whether the sample size of pages was large enough to make such sweeping generalizations. My misgiving about this aside, several observations did ring true to me as someone who’s lurked in these forums. For instance, the authors found that posts and memes were highly shared, with people frequently “sharing” posts on their own Facebook pages or on their friends’ pages. Overall, there were more than 2 million shares across the six pages studied during the three-year data collection period (14 April 2013 to 14 April 2016), which means that an antivaccine Facebook page’s reach goes far beyond just the members of the group who frequent that page. What would be interesting to me is the question of which social network is more efficient at increasing traffic to links to antivaccine articles, Facebook or Twitter. My guess is that it would be FB, based on personal experience. Remember that time I got into a minor Twitter kerfuffle last year with William Shatner, who has over one million followers on Twitter? He actually linked to one of my posts on my not-so-super-secret other blog, and I was shocked that the bump in traffic that I received was minor at best. On the other hand, looking at traffic sources when I have a traffic spike often reveals FB as the source.

Another finding is that the networks of antivaccine FB pages exhibit the characteristics of what the authors refer to as “small world” networks. Small world networks have two characteristics that allow messages to move through the network effectively. First, in these networks small groups of nodes are highly clustered and interconnected. The second characteristic is that large groups of nodes in the networks are sparsely interconnected. Such networks are robust and resist damage. Specifically, randomly removing nodes from such a network will have little effect on the effectiveness and dynamics of the network. If one node shuts down, the network continues to function, barely noticing.
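To make the “small world” idea a bit more concrete, here is a toy sketch using the two standard textbook measures: the clustering coefficient (how often a node's neighbours are also linked to each other) and the average shortest-path length. This is my own illustration, not the authors' method or data. The 20-node ring and the two long-range shortcuts are arbitrary choices, and all the function names are hypothetical.

```python
from collections import deque
from itertools import combinations

def ring_lattice(n, k):
    """Each of n nodes links to its k nearest neighbours (k/2 on each side)."""
    graph = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            graph[i].add(j)
            graph[j].add(i)
    return graph

def average_clustering(graph):
    """Mean fraction of each node's neighbour pairs that are themselves linked."""
    total = 0.0
    for nbrs in graph.values():
        pairs = list(combinations(nbrs, 2))
        if pairs:
            total += sum(1 for a, b in pairs if b in graph[a]) / len(pairs)
    return total / len(graph)

def average_path_length(graph):
    """Mean shortest-path length over all reachable pairs, via breadth-first search."""
    total, count = 0, 0
    for source in graph:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for nbr in graph[node]:
                if nbr not in dist:
                    dist[nbr] = dist[node] + 1
                    queue.append(nbr)
        total += sum(dist.values())
        count += len(dist) - 1
    return total / count

# A tightly clustered ring, and the same ring with two arbitrary shortcuts added.
lattice = ring_lattice(20, 4)
shortcut = ring_lattice(20, 4)
for a, b in [(0, 10), (5, 15)]:
    shortcut[a].add(b)
    shortcut[b].add(a)

for name, g in [("lattice", lattice), ("with shortcuts", shortcut)]:
    print(name, average_clustering(g), average_path_length(g))
```

The thing to look for is that a handful of long-range links shortens the typical path between any two nodes while leaving the local clustering largely intact. That combination is what lets a message hop quickly from one tight cluster to another.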

Finally, let’s circle back to the observation that women dominate antivaccine FB pages. Here’s what the authors say about it:

The gender composition of anti-vaccination movement reflects dominant cultural understandings of parenting. That is, that the parenting and care of children is primarily a maternal concern. Women are still more likely to stay at home and care for children (Medved, 2016), and this care includes making decisions about healthcare choices. Historically, vaccination was seen to be ‘a mother’s question’ (Durbach, 2005, p. 60), women’s maternal instincts were to the privileged as forms of knowledge as mothers were argued to be best placed to tell if their children were healthy or not. In the contemporary anti-vaccination movement, our analysis suggests that anti-vaccination, is now, more than ever, ‘a mother’s question’. The anti-vaccination movement is now primarily lead by women. Notably, one of the most popular anti-vaccination pages on Facebook ‘Vaccine Info’ is run by Dr Sherri Tenpenny. Given the gendered nature of participants on anti-vaccination pages, we can conclude that the anti-vaccination movement is a significantly ‘feminised’ social phenomena, although the issue it addresses is not gender specific.

This all rings pretty true, and it certainly is true that antivaccine pseudoscience is the pseudoscience that transcends many boundaries, particularly political. It is not surprising that it also transcends gender boundaries. In any case, there’s little doubt that the antivaccine movement glorifies “maternal instinct,” with mothers of autistic children frequently claiming special maternal knowledge as a rationale to dismiss science that does not support their belief that vaccines caused their child’s autism.

The other finding of this study that rings true is the moral outrage that dominates antivaccine groups. I’ve noted and documented that this sort of outrage not infrequently manifests itself in references to the Holocaust, with comparisons of vaccines with mass poisonings and likening pro-vaccine advocates to Nazis. Another form I’ve noticed this moral outrage taking is a fantasy of ultimate vindication, in which “they” are forced to admit that antivaxers were right all along. This is sometimes even combined with fantasies of retribution, featuring visions of punishing the “Nazis” who made their children autistic with “toxic vaccines” (or at least demanding their unconditional surrender). It’s how “they” view “us,” as evil enemies. It’s why they game and abuse reporting algorithms to trick Facebook into silencing pro-vaccine voices.

Sadly, I can’t disagree with the authors’ conclusion:

This ‘righteous indignation’, in combination with the network characteristics identified in this study, indicates that anti-vaccination communities are likely to be persistent across time and global in scope as they utilise the affordances of social media platforms to disseminate anti-vaccination information. Concerns about vaccination reveal a community that feels persecuted and is suspicious of mainstream medical practice and government-sanctioned methods to prevent disease. In a generation that has rarely seen these diseases first hand, the risk of adverse reaction seems more immediate and pressing than disease prevention (Davies et al., 2002).

This, too, is a “Well, duh!” conclusion, one that anyone who’s studied the antivaccine movement for any significant length of time will recognize as true. That’s not to say that there isn’t value in demonstrating these conclusions by another method, particularly when it sheds light on how antivaccine misinformation spreads. The challenge is devising strategies to counter the exaggerated fears of rare adverse reactions in people who’ve never seen the horrors that vaccine-preventable diseases can inflict without heightening the paranoia already so ingrained in the antivaccine movement. The problem in dealing with antivaccine activists on social media, as is the problem in dealing with many groups dedicated to pseudoscience, conspiracy theories, and misinformation, is how to penetrate the bubble of the echo chamber, where misinformation is reinforced and attempts to bring scientific evidence to bear are ignored or attacked. That remains one of the great problems of the 21st century.